Transformer: Multi-Head Attention (PyTorch)

创始人

2025-01-11 08:34:26

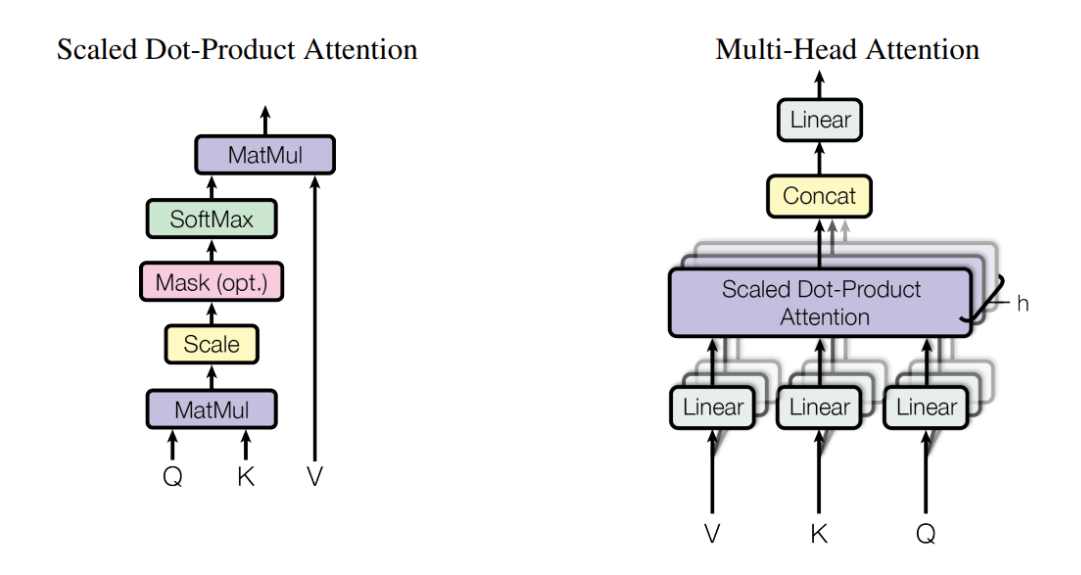

1. Schematic
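For reference, the computation shown in the schematic is usually written as follows. This is the standard textbook formulation, not something stated in the post itself. The symbols $d_k$ (per-head dimension), $h$ (number of heads), and the projection matrices $W_i^Q$, $W_i^K$, $W_i^V$, $W^O$ are notation assumed here; in the code they would correspond to `head_dim`, `heads`, and the four bias-free `nn.Linear` layers.

$$
\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V
$$

$$
\mathrm{head}_i = \mathrm{Attention}(Q W_i^Q,\; K W_i^K,\; V W_i^V), \qquad
\mathrm{MultiHead}(Q, K, V) = \mathrm{Concat}(\mathrm{head}_1, \dots, \mathrm{head}_h)\, W^O
$$

With `embed_size = 512` and `heads = 8`, each head works on $d_k = 512 / 8 = 64$ dimensions, so the scores are divided by $\sqrt{64} = 8$.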

2. Code

    import torch
    import torch.nn as nn


    class Multi_Head_Self_Attention(nn.Module):
        def __init__(self, embed_size, heads):
            super().__init__()
            # The embedding must split evenly across the heads.
            assert embed_size % heads == 0, "embed_size must be divisible by heads"
            self.embed_size = embed_size
            self.heads = heads
            self.head_dim = embed_size // heads

            # Bias-free linear projections for Q, K, V and the output.
            self.queries = nn.Linear(self.embed_size, self.embed_size, bias=False)
            self.keys = nn.Linear(self.embed_size, self.embed_size, bias=False)
            self.values = nn.Linear(self.embed_size, self.embed_size, bias=False)
            self.fc_out = nn.Linear(self.embed_size, self.embed_size, bias=False)

        def forward(self, queries, keys, values, mask):
            N = queries.shape[0]          # batch_size
            query_len = queries.shape[1]  # sequence_length
            key_len = keys.shape[1]       # sequence_length
            value_len = values.shape[1]   # sequence_length

            queries = self.queries(queries)
            keys = self.keys(keys)
            values = self.values(values)

            # Split the embedding into self.heads pieces
            # batch_size, sequence_length, embed_size(512) -->
            # batch_size, sequence_length, heads(8), head_dim(64)
            queries = queries.reshape(N, query_len, self.heads, self.head_dim)
            keys = keys.reshape(N, key_len, self.heads, self.head_dim)
            values = values.reshape(N, value_len, self.heads, self.head_dim)

            # batch_size, sequence_length, heads(8), head_dim(64) -->
            # batch_size, heads(8), sequence_length, head_dim(64)
            queries = queries.transpose(1, 2)
            keys = keys.transpose(1, 2)
            values = values.transpose(1, 2)

            # Scaled dot-product attention
            score = torch.matmul(queries, keys.transpose(-2, -1)) / (self.head_dim ** 0.5)

            # Positions where mask == 0 get -inf, so softmax assigns them zero weight.
            if mask is not None:
                score = score.masked_fill(mask == 0, float("-inf"))

            # batch_size, heads(8), sequence_length, sequence_length
            attention = torch.softmax(score, dim=-1)

            out = torch.matmul(attention, values)

            # batch_size, heads(8), sequence_length, head_dim(64) -->
            # batch_size, sequence_length, heads(8), head_dim(64) -->
            # batch_size, sequence_length, embed_size(512)
            # Merge the heads back so the result can be fed to the following layers.
            out = out.transpose(1, 2).contiguous().reshape(N, query_len, self.embed_size)

            out = self.fc_out(out)
            return out


    batch_size = 64
    sequence_length = 10
    embed_size = 512
    heads = 8
    mask = None

    Q = torch.randn(batch_size, sequence_length, embed_size)
    K = torch.randn(batch_size, sequence_length, embed_size)
    V = torch.randn(batch_size, sequence_length, embed_size)

    model = Multi_Head_Self_Attention(embed_size, heads)
    output = model(Q, K, V, mask)
    print(output.shape)  # torch.Size([64, 10, 512])
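The example above passes `mask = None`, so the `masked_fill` branch never runs. The sketch below shows one way a causal (lower-triangular) mask could be built and applied to the same scaled dot-product step. The helper names `causal_mask` and `masked_attention` are hypothetical and not part of the original post, and the mask shape `(1, 1, seq_len, seq_len)` is an assumption chosen so that it broadcasts over the batch and head dimensions.

```python
import torch


def causal_mask(seq_len: int) -> torch.Tensor:
    # Hypothetical helper: 1 where attention is allowed, 0 where it is blocked.
    # The two leading singleton dims broadcast over batch and heads.
    return torch.tril(torch.ones(seq_len, seq_len)).view(1, 1, seq_len, seq_len)


def masked_attention(q, k, v, mask=None):
    # Hypothetical helper mirroring the attention step of the module above.
    # q, k, v: (batch_size, heads, sequence_length, head_dim)
    head_dim = q.shape[-1]
    score = torch.matmul(q, k.transpose(-2, -1)) / (head_dim ** 0.5)
    if mask is not None:
        score = score.masked_fill(mask == 0, float("-inf"))
    weights = torch.softmax(score, dim=-1)
    return torch.matmul(weights, v), weights


torch.manual_seed(0)
q = torch.randn(2, 8, 10, 64)
k = torch.randn(2, 8, 10, 64)
v = torch.randn(2, 8, 10, 64)

out, weights = masked_attention(q, k, v, causal_mask(10))
print(out.shape)  # torch.Size([2, 8, 10, 64])
```

A mask of the same shape could presumably be passed as the `mask` argument of `Multi_Head_Self_Attention.forward`, since that method applies `masked_fill(mask == 0, ...)` to a score tensor of shape `(batch_size, heads, sequence_length, sequence_length)`. Note that a row that is masked everywhere would become all `-inf` and produce NaN after softmax; the causal mask avoids this because every position can at least attend to itself.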
